Academic literacy assisted by generative artificial intelligence: impact on the quality of disciplinary writing

La alfabetización académica asistida por inteligencia artificial generativa: impacto en la calidad de la escritura disciplinaria

Dr. José Manuel de Amo Sánchez-Fortún. Universidad de Almería. España.

Dr. Kevin Baldrich Rodríguez. Universidad de Almería. España.

Received: 2025/02/12 Revised: 2025/11/10 Accepted: 2025/11/21 Published: 2026/01/01

How to cite:

De Amo Sánchez-Fortún, J.M. & Baldrich Rodríguez, K. (2026). La alfabetización académica asistida por inteligencia artificial generativa: impacto en la calidad de la escritura disciplinaria [Academic literacy assisted by generative artificial intelligence: impact on the quality of disciplinary writing]. Pixel-Bit. Revista de Medios y Educación, 75, Art. 2. https://doi.org/10.12795/pixelbit.113712

ABSTRACT

The present study examines the impact of integrating

generative artificial intelligence (AI) tools into the development of academic

writing skills, with a particular emphasis on disciplinary literacy and

multimodal representation as foundational pillars for the construction and

effective communication of scientific discourse. This quasi-experimental,

mixed-methods research involved 150 university students, divided into an

experimental group that exclusively used generative AI tools and a control

group that applied traditional writing strategies. The AIAS scale and the PAIR

intervention model were employed to ensure that the use of technology

complemented critical thinking processes and student authorship rather than

replacing them. Texts were assessed with a validated rubric covering key

aspects such as textual coherence and cohesion, grammatical accuracy, proper

handling of bibliographic references, and integration of visual elements.

Significant improvements were observed across all evaluated aspects, particularly

in the ability to articulate more structured academic discourse and effectively

integrate multimodal resources. These findings underscore the potential of

generative AI not only to optimize writing processes but also to enhance

analytical skills and expand students' expressive resources in academic

contexts. The research highlights the need to establish pedagogical frameworks

to regulate its implementation, fostering critical thinking and comprehensive

training in higher education.

RESUMEN

El presente estudio examina

el impacto de la integración de herramientas de inteligencia artificial

generativa en el desarrollo de competencias de escritura académica, con un

énfasis particular en la alfabetización disciplinar y la representación multimodal

como pilares en la construcción y comunicación efectiva del discurso

científico. La investigación, de diseño cuasiexperimental y enfoque mixto,

involucró a 150 estudiantes universitarios, organizados en un grupo

experimental que utilizó exclusivamente herramientas de IAG y un grupo de

control que aplicó estrategias de composición escrita tradicionales. Se

emplearon la escala AIAS y el modelo de intervención PAIR para garantizar que

el uso de la tecnología complementara los procesos de pensamiento crítico y la

autoría del estudiante, en lugar de sustituirlos. Los resultados, obtenidos

mediante una rúbrica validada, evaluaron aspectos clave como coherencia y

cohesión textual, corrección gramatical, manejo adecuado de referencias

bibliográficas e integración de elementos visuales. Se evidenciaron mejoras

significativas en todos los aspectos evaluados, especialmente en la capacidad

para articular discursos académicos más estructurados y en la integración

efectiva de recursos multimodales. Estos hallazgos ponen de relieve el

potencial de la IAG no solo para optimizar los procesos de escritura, sino

también para fortalecer las competencias analíticas y ampliar los recursos

expresivos de los estudiantes en contextos académicos. La investigación

evidencia la necesidad de establecer marcos pedagógicos que regulen su

implementación, fomentando el pensamiento crítico y una formación integral en

la educación superior.

KEYWORDS · PALABRAS CLAVES

Generative Artificial Intelligence; academic literacy; higher education; quality of disciplinary writing

Inteligencia Artificial Generativa; alfabetización académica; educación superior; calidad de la escritura disciplinar

1. Introduction

1.1. Academic writing and disciplinary literacy

Academic writing functions as a key instrument for

integration and active participation within the scientific community of each

discipline (Biber & Gray, 2010; Carlino, 2013). Mastery of this form of

writing entails not only the capacity to communicate complex ideas clearly and

coherently, but also to contribute to the advancement of disciplinary knowledge

through discursive practices aligned with the epistemological and rhetorical

standards of each field. In higher education, such training plays a crucial role

in students’ academic success, as it supports the appropriation of the

discourses specific to each disciplinary domain and fosters autonomous,

meaningful learning.

Proficiency in writing within formal academic contexts

constitutes a complex challenge that encompasses aspects such as discursive

organisation, the appropriate use of linguistic structures associated with

formal registers, and the critical and relevant integration of bibliographic

references (McKinley, 2013). Research has shown that explicit and systematic

instruction in writing strategies contributes significantly to the development

of advanced writing competences (Fathi & Rahimi, 2024; Cassany

& Castelló, 2010). However, several factors—such

as limited time, scarce resources, insufficient teacher training, and the lack

of continuous support—hamper the effective implementation of pedagogical

practices focused on academic writing development (Jin et al., 2025). These

limitations highlight the need to explore alternative pedagogical approaches

and support tools that complement teaching practice and strengthen

teaching–learning processes in this area.

Academic production has historically been enriched

through the incorporation of alternative modes of representation—such as

images, graphs and diagrams—which, when combined with digital tools, enhance

expository clarity and contribute to more effective discursive structuring

(Kress & van Leeuwen, 2020; Díaz-Cuevas & Rodríguez-Herrera, 2024).

Multimodal writing, by integrating diverse forms of communication, facilitates

the understanding of complex concepts and promotes a dynamic interaction between

text and readers, making it a highly relevant pedagogical strategy in

educational settings (Derga et al., 2024; Walter, 2024). Within this framework,

advances in generative artificial intelligence (GenAI) have broadened the

possibilities for the revision, optimisation and enrichment of texts,

supporting the ethical and critical integration of these resources into

disciplinary literacy processes and academic training (Wang et al., 2024).

1.2. Integration of GenAI in academic writing

The incorporation of GenAI into writing processes has

been the subject of critical analysis due to its capacity to enhance discursive

cohesion, correct grammatical errors, and structure ideas in a logical manner

(Goulart et al., 2024; Acosta, 2024). Recent studies have examined different

tools (such as ChatGPT, Copilot and Gemini), highlighting their ability to

improve the organisation and clarity of texts, thereby optimising their quality

before reaching the final version (Aladini et al.,

2025; Teng, 2024).

The use of GenAI in teaching and learning requires an

approach grounded in solid pedagogical principles and appropriate regulation.

Without clear guidance, these technologies may foster dependency on automated

content generation, potentially limiting the development of key skills such as

autonomous learning and students’ argumentative capacity (García-Peñalvo, 2024;

Kalifa & Albadawy, 2024). For this reason, it is

essential to establish pedagogical frameworks that not only guide the use of

these tools but also promote metacognition and critical thinking—skills that

are crucial for enabling students to analyse, evaluate and select, in a

well-reasoned manner, the information generated by these technologies (Huang

& Teng, 2025).

Furthermore, the development of models such as the

AIAS (Artificial Intelligence Assessment Scale) has made it possible to

identify levels of use in which GenAI functions as a complementary resource

that strengthens students’ abilities without replacing them. These strategies

have proved effective in supporting more autonomous and meaningful learning,

reinforcing the importance of integrating these technologies ethically and

critically into educational processes (Perkins et al., 2024; Ayuso-del Puerto & Gutiérrez-Esteban,

2022). This perspective underscores the need to employ GenAI as a tool that

enriches students’ competences and fosters their overall development in

academic settings.

1.3. Benefits, challenges and ethical considerations in the use of GenAI in higher education

The use of artificial intelligence in disciplinary

writing has shown a positive impact across multiple dimensions. Among its most

notable contributions are the optimisation of the time devoted to text

production, improvements in grammatical and stylistic accuracy, and the

mitigation of cognitive blocks that often hinder idea generation during writing

(Román-Acosta, 2023). By providing immediate and detailed feedback, these

technologies facilitate students’ autonomous detection of errors, enhancing

self-regulation processes and strengthening their confidence in written

production (Wise et al., 2024). This approach not only expands opportunities

for autonomous learning but also positions artificial intelligence as a tool

with high potential for the development of advanced competences in academic

writing.

Nevertheless, the incorporation of GenAI in

educational contexts raises the challenge of potential overreliance on these

tools, which may limit the development of fundamental skills such as

argumentation and originality in writing (Dabis & Csáki,

2024; Fiorillo, 2024). This risk underscores the need to train students in the

critical use of these technologies, promoting practices that balance their

integration with the strengthening of cognitive and creative competences (Su et

al., 2024; Pigg, 2024).

From an ethical and regulatory perspective, the use of

emerging technologies raises concerns regarding model transparency and

algorithmic biases, issues that trouble the scientific community due to their

implications for fairness and reliability (Ou et al., 2024). The assistance

provided by GenAI in written composition constitutes a challenge for academic

integrity, particularly in relation to authorship attribution and the

limitations of current systems in identifying texts generated with these tools,

which complicates the detection of potential plagiarism (Casheekar

et al., 2024). In response to these concerns, regulatory proposals have been

developed that include the implementation of policies focused on the ethical

and responsible use of these technologies, together with the promotion of

digital literacy programmes incorporating principles of accountability

(García-Peñalvo, 2024). Moreover, the design of pedagogical strategies that

guide the critical and strategic use of these technologies is essential for

strengthening students’ analytical capacity during the process of reviewing and

editing AI-generated texts (García-Peñalvo et al.,

2024; Ciaccio, 2023).

2. Objectives

General objective

To analyse the impact of GenAI tools on the

development of disciplinary writing competences in university students.

Specific objectives

- To evaluate the quality of academic texts produced with and without the use of GenAI tools, considering dimensions such as coherence, cohesion, terminological accuracy, argumentation, and adherence to disciplinary conventions, including bibliographic referencing.

- To examine the impact of GenAI tools on the different stages of the disciplinary writing process, encompassing idea generation, planning, text structuring, revision and editing.

- To explore students’ perceptions regarding the use of GenAI tools in academic writing, analysing their perceived usefulness, ease of use, and influence on confidence and autonomy during the writing process.

- To determine the relationship between the use of GenAI tools and the development of disciplinary writing competences, assessing the extent to which these tools contribute to improved argumentation, logical structuring and appropriate use of academic language.

3. Methodology

The study adopted a mixed-methods approach

(quantitative–qualitative) and a quasi-experimental design with non-equivalent

groups, appropriate for educational contexts in which random assignment is not

feasible (Creswell, 2014; Shadish, Cook &

Campbell, 2002). The experimental group integrated GenAI tools into the

academic writing process, whereas the control group employed conventional

strategies.

The intervention was aligned with Level 3 of the AIAS

scale (Perkins et al., 2024), which defines a formative and reflective use of

GenAI. This level was selected for its relevance in educational contexts that

aim to strengthen students’ autonomy and writing competence. Within this

framework, GenAI functions as a cognitive mediator, offering feedback and

structural support without replacing authorship or critical thinking. Learning

is therefore oriented towards the development of metacognitive and discursive

competences, avoiding technological dependency.

In addition, the PAIR framework (Problem, AI

Selection, Interaction and Reflection) was applied as the pedagogical structure

of the intervention. This model was operationalised through work sequences in

which students (1) identified a specific writing need, (2) selected the most

suitable tool to address it, (3) interacted critically with the GenAI system by

evaluating its suggestions, and (4) reflected on the revisions made. This

process enabled GenAI to be incorporated as a dialogic resource in learning,

fostering self-regulation, critical thinking and awareness of one’s own writing

process.

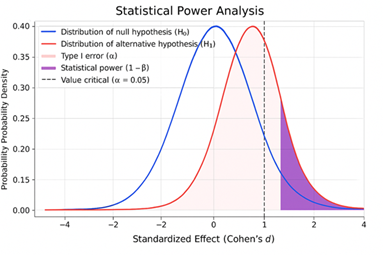

3.1. Sample

A total of 150 fourth-year students from the Primary

Education Degree at the University of Almería participated in the study (75 in

the experimental group and 75 in the control group). The sample size was

determined through a power analysis (α = 0.05, power =

0.80, d = 0.50), which confirmed its adequacy for detecting significant

differences between groups (Cohen, 1988).
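The reported parameters can be checked with a short calculation. The sketch below is illustrative only: it assumes a two-sided comparison of two independent group means and uses the normal approximation to the t-test, neither of which is specified in the study, and the function names are hypothetical.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def required_n_per_group(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate n per group for a two-sided, two-sample comparison of means."""
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)  # critical value of the test
    z_power = _STD_NORMAL.inv_cdf(power)          # quantile for the target power
    return math.ceil(2 * ((z_alpha + z_power) / d) ** 2)


def approximate_power(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power achieved with n_per_group participants in each group."""
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    return _STD_NORMAL.cdf(d * math.sqrt(n_per_group / 2) - z_alpha)


n_needed = required_n_per_group(d=0.50)
power_75 = approximate_power(d=0.50, n_per_group=75)
print(n_needed, round(power_75, 2))  # 63 0.86
```

Under these assumptions, roughly 63 participants per group are required (an exact t-based calculation gives a slightly larger figure), so 75 students per group exceeds the requirement for a medium effect.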

The selection was non-probabilistic and based on

convenience, respecting the pre-existing organisation of the groups. Students

with prior experience using GenAI tools or those who did not complete all

phases of the study were excluded. The attrition rate (3.3%) was statistically

negligible.

Before the intervention, an initial diagnostic test

was administered, consisting of a brief academic writing task on a general

educational topic. The texts were assessed using the same rubric employed in

the study to verify the initial equivalence between groups. The results

showed no significant difference in writing skills between groups (t(148) =

0.87, p = 0.382), supporting the validity of subsequent comparisons.

Figure 1

Statistical power analysis.

Source: own elaboration.

3.2. Study phases

The study was carried out in three phases: pre-test,

intervention and post-test.

- Pre-test. Students were asked to produce an argumentative essay without technological assistance (“How can AI improve teaching and learning?”). The texts were assessed using an ad hoc rubric composed of six dimensions: coherence, cohesion, linguistic accuracy, argumentative strength, use of references and quality of visual elements.

- Intervention. Over four weeks, academic writing activities were implemented using differentiated methodologies. The experimental group worked with tools such as ChatGPT, Copilot, Gemini, DeepSeek, Scopus AI, Consensus, Canva and Napkin, exclusively for revising, structuring and optimising their own texts, in accordance with Level 3 of the AIAS scale. The control group followed traditional methods without technological mediation.

- Post-test. Students were asked to write a new argumentative essay (“Should the use of AI in education be regulated?”), assessed using the same rubric. In addition, the experimental group completed a perception questionnaire and a tool-use log (frequency, duration and type of modifications).

3.3. Data analysis instruments

Three main instruments were used: a writing assessment

rubric, a perception questionnaire, and a log of GenAI tool use. All were

designed and validated by specialists in Language and Literature Didactics and

educational assessment.

The analysis of academic writing was conducted using a

rubric that enabled precise and consistent evaluation of the pre-test and

post-test productions. The rubric included six dimensions: textual coherence

and cohesion, grammatical and stylistic accuracy, appropriate use of

bibliographic references, quality of graphs and tables, integration of visual

elements, and academic clarity. Each dimension was rated on a Likert scale from

1 (very low) to 5 (excellent).

The instrument underwent a validation process through

expert judgement, during which specialists reviewed the clarity of the criteria

and their alignment with the study objectives. Cronbach’s alpha (α = 0.91) indicated

a high level of internal consistency across the rubric dimensions.
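For readers unfamiliar with the coefficient, Cronbach's alpha compares the sum of the variances of the individual dimensions with the variance of the total score. The following is a minimal sketch with hypothetical ratings (not data from the study) and a hypothetical function name; the study's own value was presumably obtained in SPSS.

```python
from statistics import variance


def cronbach_alpha(scores: list[list[float]]) -> float:
    """Cronbach's alpha for rows = rated texts, columns = rubric dimensions."""
    k = len(scores[0])                  # number of dimensions (items)
    items = list(zip(*scores))          # transpose: one tuple per dimension
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in scores])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


# Hypothetical 1-5 ratings: five texts scored on three dimensions.
ratings = [
    [4, 5, 4],
    [3, 3, 4],
    [5, 5, 5],
    [2, 3, 2],
    [4, 4, 5],
]
alpha = cronbach_alpha(ratings)
print(round(alpha, 3))  # 0.918
```

Values close to 1 indicate that the dimensions vary together across texts, which is what a coefficient such as the reported 0.91 expresses.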

The perception questionnaire was administered to the

experimental group to explore students’ views on the use of GenAI tools in

academic writing. It included Likert-scale items (1–5) and open-ended questions

addressing aspects such as ease of use, perceived usefulness, impact on

confidence and creativity, and challenges in technological integration.

Before administration, a pilot test was conducted with

20 students with similar characteristics to the sample but not involved in the

intervention. This phase allowed verification of item clarity and relevance,

leading to the revision of two questions. The questionnaire showed high

internal reliability (α = 0.94).

Open-ended responses were analysed through inductive

thematic coding (Braun & Clarke, 2006), carried out in three stages:

exploratory reading, open coding, and category grouping. This process

identified four main categories:

1. Facilitation of the writing process, highlighting that GenAI helped organise ideas and improve text structure.

2. Optimisation of reference use, valuing the tool’s capacity to manage citations and sources.

3. Incorporation of multimodal elements, recognising the positive impact of AI-generated graphics and visualisations.

4. Challenges in adapting to GenAI, referring to initial difficulties and the evaluation of the reliability of AI-generated suggestions.

Finally, the tool-use log recorded the frequency and

duration of use of each application, as well as the functionalities employed

during the planning, drafting and revision of the essays. These data made it

possible to quantify interaction with the technology and analyse its influence

on the improvement of written production.

3.4. Data analysis

For the data analysis, SPSS software was used (IBM

SPSS Statistics for Windows, Version 28.0), applying different statistical

tests to assess the evolution of writing quality and the relationship between

the use of GenAI tools and the outcomes obtained. First, an analysis of

covariance (ANCOVA) was conducted to compare post-test scores while adjusting

for initial pre-test differences, so that the observed effects could be attributed

with greater confidence to the intervention rather than to pre-existing differences between

groups. ANCOVA was selected because it adjusts for baseline differences and

improves the precision of the estimates by reducing unexplained variability. The

assumptions of homogeneity of regression slopes and normality of residuals were

verified to support the validity of the statistical model. In addition,

F statistics and p-values were calculated to determine the significance

of the differences identified.
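The logic of the ANCOVA can be sketched in a few lines. This is an illustrative implementation, not the SPSS procedure used in the study: it assumes a single covariate (the pre-test score) and a common regression slope across groups, the data are hypothetical, and the function names are invented for the example. The p-value is omitted because it requires the F distribution, which the Python standard library does not provide.

```python
from statistics import fmean


def _sums(x, y):
    """Centred sums of squares and cross-products for paired lists x and y."""
    mx, my = fmean(x), fmean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxx, syy, sxy


def one_way_ancova(groups: dict[str, tuple[list[float], list[float]]]):
    """One-covariate ANCOVA; groups maps a label to (pre_scores, post_scores)."""
    # Within-group sums pooled across groups (full model with a common slope).
    sxx_w = syy_w = sxy_w = 0.0
    for pre, post in groups.values():
        sxx, syy, sxy = _sums(pre, post)
        sxx_w, syy_w, sxy_w = sxx_w + sxx, syy_w + syy, sxy_w + sxy
    slope = sxy_w / sxx_w
    sse_full = syy_w - sxy_w ** 2 / sxx_w

    # Total sums ignoring group membership (reduced model: covariate only).
    all_pre = [v for pre, _ in groups.values() for v in pre]
    all_post = [v for _, post in groups.values() for v in post]
    sxx_t, syy_t, sxy_t = _sums(all_pre, all_post)
    sse_reduced = syy_t - sxy_t ** 2 / sxx_t

    k, n = len(groups), len(all_post)
    df_effect, df_error = k - 1, n - k - 1
    f_value = ((sse_reduced - sse_full) / df_effect) / (sse_full / df_error)

    # Adjusted means: post-test means evaluated at the grand pre-test mean.
    grand_pre = fmean(all_pre)
    adjusted = {
        name: fmean(post) - slope * (fmean(pre) - grand_pre)
        for name, (pre, post) in groups.items()
    }
    return f_value, (df_effect, df_error), adjusted


# Hypothetical rubric scores (1-5), not data from the study.
data = {
    "control": ([2, 3, 4], [3, 3, 4]),
    "experimental": ([2, 3, 4], [4, 5, 5]),
}
f_value, dfs, adjusted_means = one_way_ancova(data)
print(round(f_value, 2), dfs)  # 24.0 (1, 3)
```

The F statistic compares how much residual variation remains when group membership is ignored with how much remains when it is included, after the pre-test score has been accounted for in both models.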

Alongside the ANCOVA, descriptive analyses were

performed to characterise the frequency and duration of GenAI tool use in the

experimental group. The number of interactions with each tool, the total time

dedicated, and the specific functionalities employed were documented. To

complement the quantitative analyses, a qualitative analysis of the open-ended

questionnaire responses was carried out, enabling the identification of

patterns in students’ perceptions regarding the usefulness of the tools, the

difficulties encountered, and the impact on confidence and creativity when

writing academic texts.

The combined use of quantitative and qualitative

methods provided a comprehensive understanding of the impact of GenAI tools on

academic writing. The inclusion of ANCOVA in the statistical analysis

strengthened the reliability of the findings by adjusting the comparison between

the experimental and control groups for baseline differences in writing

performance. Furthermore, the validation of the instruments employed

ensured the consistency and accuracy of the data collected. This approach enabled

a rigorous determination of the impact of artificial intelligence on the

improvement of academic writing, offering both objective and subjective

evidence regarding participants’ perceptions and performance throughout the

study.

4. Results

The findings of the study show statistically

significant differences between the experimental group and the control group

across all dimensions of academic writing. The analysis of covariance (ANCOVA),

with pre-test scores included as a covariate, confirmed that the pedagogical

use of GenAI produced substantial and consistent improvements in text quality,

both in linguistic and discursive aspects as well as in multimodal components.

Table 1 presents the means, standard deviations and F-values for the post-test

in each of the evaluated dimensions.

Table 1

Comparison of means by academic writing dimensions (post-test).

| Evaluated dimension | Control group (M ± SD) | Experimental group (M ± SD) | F | p |
|---|---|---|---|---|
| Coherence and cohesion | 3.5 ± 0.7 | 4.7 ± 0.5 | 52.41 | <.001 |
| Grammatical and stylistic accuracy | 3.6 ± 0.6 | 4.8 ± 0.4 | 58.33 | <.001 |
| Use of bibliographic references | 3.4 ± 0.7 | 4.7 ± 0.5 | 49.02 | <.001 |
| Integration of visual elements | 3.3 ± 0.8 | 4.7 ± 0.6 | 54.89 | <.001 |
| Academic clarity and style | 3.5 ± 0.7 | 4.8 ± 0.5 | 56.12 | <.001 |

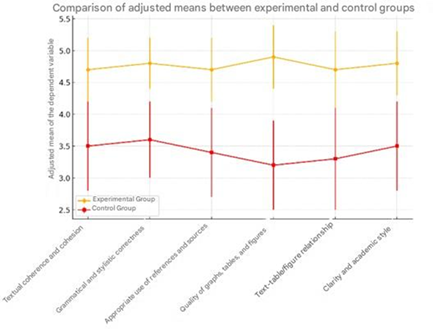

As shown in Figure 2, the experimental group presents

significantly higher adjusted means across all dimensions of academic writing,

once initial differences were controlled through the analysis of covariance

(ANCOVA).

The consistent separation between the two lines

reflects a sustained overall improvement, particularly in textual coherence and

cohesion, grammatical accuracy, and the use of references. These differences

confirm that the pedagogical integration of GenAI enhanced the discursive and

stylistic quality of the texts produced.

Figure 2

Adjusted mean comparison between the experimental and control groups (ANCOVA).

Note: The bars indicate the 95% confidence intervals of the adjusted means.

Source: own elaboration.
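The size of the between-group differences in Table 1 can be illustrated with a standardised mean difference. The study reports F and p values only, so the calculation below is an illustrative sketch rather than a result from the paper. It assumes that the ± values in Table 1 are standard deviations of unadjusted post-test scores and that all 75 students per group contributed to each comparison; the function name is hypothetical.

```python
import math


def cohens_d(m1: float, sd1: float, n1: int, m2: float, sd2: float, n2: int) -> float:
    """Standardised mean difference (group 2 minus group 1) with a pooled SD."""
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    return (m2 - m1) / math.sqrt(pooled_var)


# Post-test values from Table 1:
# (control M, control SD, experimental M, experimental SD).
table_1 = {
    "Coherence and cohesion": (3.5, 0.7, 4.7, 0.5),
    "Grammatical and stylistic accuracy": (3.6, 0.6, 4.8, 0.4),
    "Use of bibliographic references": (3.4, 0.7, 4.7, 0.5),
    "Integration of visual elements": (3.3, 0.8, 4.7, 0.6),
    "Academic clarity and style": (3.5, 0.7, 4.8, 0.5),
}
N_PER_GROUP = 75  # assumption: every student is included in each comparison

effect_sizes = {
    dim: cohens_d(mc, sdc, N_PER_GROUP, me, sde, N_PER_GROUP)
    for dim, (mc, sdc, me, sde) in table_1.items()
}
for dim, d in effect_sizes.items():
    print(f"{dim}: d = {d:.2f}")
```

Because these values are based on raw rather than covariate-adjusted means, they should be read only as a rough indication of magnitude alongside the ANCOVA results.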

Use of GenAI tools

The activity log of the experimental group enabled the

analysis of the frequency and duration of use for each tool.

As shown in Table 2, ChatGPT and Copilot were the most

frequently used, followed by Gemini and DeepSeek. Reference management tools

(Scopus AI and Consensus) and visual design tools (Canva and Napkin) showed

moderate but consistent use, indicating a balanced integration of linguistic,

documentary and visual functions.

Table 2

Frequency and average time of use of GenAI tools (experimental group).

| Tool | Mean frequency (± SD) | Mean time (min ± SD) |
|---|---|---|
| ChatGPT | 9.2 ± 2.1 | 125 ± 15 |
| Copilot | 7.8 ± 1.9 | 110 ± 14 |
| Gemini | 6.5 ± 1.6 | 95 ± 12 |
| DeepSeek | 5.9 ± 1.8 | 85 ± 10 |
| Scopus AI | 5.3 ± 1.4 | 75 ± 11 |
| Consensus | 4.7 ± 1.5 | 68 ± 9 |
| Canva | 4.5 ± 1.2 | 62 ± 8 |
| Napkin | 3.8 ± 1.0 | 55 ± 7 |

The usage pattern shows that students employed GenAI

primarily as a support resource for revising, structuring and optimising their

texts, in line with Level 3 of the AIAS scale, which promotes a formative and

reflective use of technology.

Perceptions and qualitative analysis

The perception questionnaire administered to the

experimental group confirmed a broadly positive evaluation of the use of GenAI

tools in the academic writing process.

Ninety-five per cent of participants considered that

the tools facilitated idea generation and organisation, 97% perceived an

improvement in grammatical and stylistic accuracy, and 93% highlighted the

contribution of visual resources to the clarity and presentation of their

texts. In addition, 89% reported that GenAI helped them manage their writing

time more effectively and meet deadlines.

The thematic analysis of the open-ended responses

identified five main categories (see Table 3), which synthesise the students’

most representative perceptions.

Table 3

Synthesis of qualitative categories, evidence and pedagogical guidelines.

| Category | Definition | Evidence and codes | Relevance | Pedagogical guideline |
|---|---|---|---|---|
| Organisation and structuring of discourse | Use of GenAI to plan and organise ideas | “initial outline”, “transitions”, “mind map” | High | Promote planning guides and metacognitive reflection. |
| Grammatical and stylistic improvement | Linguistic revision and adjustment to academic register | “academic tone”, “terminological coherence” | High | Clarify the role of GenAI as support rather than substitution. |
| Reference management | Search and formatting of academic sources | “citation verification”, “APA format” | High | Include protocols for traceability and reliability. |
| Integration of visual elements | Use of graphics and diagrams coherent with the text | “graphic summary”, “text–figure cohesion” | Medium | Design rubrics for critical reading of visual resources. |
| Initial difficulties in use | Usability barriers and comprehension of outputs | “learning curve”, “tool opacity” | Focused | Provide initial training and prompt templates. |

Students’ perceptions confirm that GenAI is viewed

primarily as a cognitive mediator that facilitates planning, revision and the

integration of resources, rather than as a substitute for the writing process.

Students acknowledge both the formative potential of these tools and the need

for teacher guidance and critical reflection to ensure ethical, autonomous and

informed use.

Taken together, the quantitative results, usage logs

and qualitative perceptions converge in indicating that the didactic and

reflective integration of GenAI significantly enhances university students’

writing competence. The use of AI as a cognitive mediator promotes

self-regulation, metalinguistic awareness and the ability to carry out critical

revision of one’s own text, provided that it is

embedded within pedagogical strategies that preserve authorship, autonomy and

the ethical dimension of academic learning.

5. Discussion and conclusions

The findings of this study confirm that the

pedagogical incorporation of GenAI tools has a positive impact on the quality

of academic writing in higher education, in line with previous research

highlighting their potential to improve discursive coherence, linguistic

accuracy and the argumentative organisation of texts (Amo Sánchez-Fortún &

Domínguez-Oller, 2024; Dai et al., 2023; García-Peñalvo, 2024; Zheng et al.,

2024). The improvements observed in the experimental group—particularly in

coherence, accuracy, use of references and integration of visual

elements—demonstrate that GenAI can function as an effective cognitive mediator

when its use is framed within a structured formative approach.

The use of Level 3 of the AIAS scale and the PAIR

model (Problem, AI Selection, Interaction, Reflection) was decisive in ensuring a

balanced pedagogical integration of the technology. This approach allowed GenAI

to operate as a support resource for the thinking process rather than as a

substitute for academic judgement. Students retained an active role in

planning, revising and validating their texts, thus avoiding cognitive

automation. This finding aligns with the warnings of Wise et al. (2024)

regarding the risks of excessive technological dependence, which can limit

creativity and the development of critical competences if guided-use frameworks

are not established. Similarly, Perkins et al. (2024) argue that a model of

reflective integration—such as PAIR—supports student autonomy and informed

decision-making regarding the contributions of AI.

From an epistemological perspective, the findings

invite a reconsideration of the notion of academic authorship in environments

mediated by artificial intelligence. The technology does not replace the

author’s voice; rather, it puts it to the test, requiring constant

decision-making regarding what to accept, modify or discard. In this way, the

quality of the written text depends not only on the final product but also on

the critical capacity with which the human author evaluates, adjusts and

validates automated suggestions. This interaction shapes a new scenario of

textual co-production, where cognitive responsibility and process traceability

become central pillars of contemporary academic ethics.

In pedagogical terms, the integration of GenAI

supported the acquisition of metacognitive skills. Students not only improved

discursive organisation and textual cohesion—as noted by Teng (2024) and Ou et

al. (2024)—but also developed greater awareness of their own linguistic and

structural decisions. This reflective dimension is key to preventing cognitive

dependence and consolidating critical academic literacy. Teaching students to

distinguish between what the tool suggests and what disciplinary criteria validate

therefore becomes a core competence in higher education.

The study also showed a positive impact of GenAI on

the use of academic references. Information retrieval and management tools

enhanced the precision and reliability of citations, facilitating the

construction of more robust and well-documented arguments. Recent research

confirms this potential of AI to optimise the search and processing of sources

(Dabis & Csáki, 2024; Goulart et al., 2024), although—like the present

study—it also warns of the need for systematic verification and ethical

training in the evaluation of bias and algorithmic opacity. In this sense,

digital literacy at university level must include the teaching of validation

and traceability protocols for AI-generated information.

In the field of multimodal writing, the results

indicate that the incorporation of visual and graphic elements—facilitated by

tools such as Canva or Napkin—not only enriched the presentation of texts but

also strengthened their argumentation by offering complementary representations

of concepts. This finding supports multimodality theories that highlight the

integration of different modes of representation as an essential component of

contemporary academic discourse (Kress & van Leeuwen, 2020; Xu et al., 2022).

Thus, university literacy expands into a digital and multimodal dimension that

redefines the relationship between text, image and knowledge.

From the students’ perspective, GenAI was perceived as

useful and accessible, although it required initial training for optimal use.

This result is consistent with Ayuso-del Puerto and Gutiérrez-Esteban (2022)

and García-Peñalvo et al. (2024), who emphasise that the effectiveness of

educational technologies largely depends on users’ digital literacy. For this

reason, the integration of GenAI in university teaching cannot be limited to

its instrumental dimension: it must be part of an educational project that

includes criteria for interpretation, ethics and reliability assessment.

Finally, the behaviour observed among participants

suggests a strategic and reflective interaction with the technology: students

adjusted and personalised the generated outputs rather than accepting them

automatically. This conscious use confirms the potential of GenAI as a

facilitator of critical thinking and self-regulation in the writing process

(Kang et al., 2023; Pigg, 2024). Moreover, the differentiated use of tools

according to the stage of the process—text-focused tools for planning and

drafting; visual tools for presentation—aligns with the findings of Díaz-Cuevas

and Rodríguez-Herrera (2024), which show that the impact of AI varies depending

on the task and the user’s purpose.

In conclusion, this study demonstrates that GenAI can

play a transformative role in higher education when incorporated within robust

pedagogical frameworks such as the AIAS scale and the PAIR model. Under these

conditions, the tools do not replace authorship or critical thinking; instead,

they amplify them. GenAI thus redefines university digital literacy practices,

orienting them towards comprehensive training that combines disciplinary

rigour, academic ethics and responsibility in the use of generative technologies.

Ultimately, learning to write with AI involves learning to think with

discernment, to engage in dialogue with technology and to uphold intellectual

autonomy in algorithm-mediated environments: the new horizon of academic

literacy in the digital age.

6. Limitations and future directions

This study has certain limitations that should be taken into account when interpreting its findings. First,

the sample was non-probabilistic and composed of students from a single

institution, which restricts the generalisation of the results to other

educational contexts. Future research should consider incorporating larger and

more diverse samples, including students from different universities and

disciplines, in order to broaden the applicability of

the findings. Second, the diversity and continuous evolution of GenAI tools

represent an ongoing challenge. Although this study included representative

tools, the rapid advancement of these technologies requires ongoing

re-evaluation so that findings on their impact on academic writing remain

current. Finally, the duration of the intervention (limited to four

weeks) prevents an analysis of whether the observed effects persist over time.

Longitudinal designs could be highly valuable for exploring the development of

writing competences over longer periods.

Funding

This research is funded by the project “Educational

Transformation: Exploring the Impact of Artificial Intelligence on University

Students’ Reading and Writing Development” (PID2023-151419OB-I00), under the

call for R&D&I Projects “Knowledge Generation”, within the State

Programme for the Promotion of Scientific and Technical Research and its

Transfer, as part of the Spanish State Plan for Scientific, Technical and

Innovation Research 2021–2023. Ministry of Science, Innovation and

Universities. Spanish State Research Agency. 2024–2027.

Conflicts of interest

The authors declare that they have no conflicts of

interest.

References

Acosta, D.R. (2024). Potential of artificial

intelligence in textual cohesion, grammatical precision, and clarity in

scientific writing. LatIA,

(2), 26. https://doi.org/10.62486/latia2024110

Aladini, A., Ismail,

S.M., Khasawneh, M.A.S., & Shakibaei,

G. (2025). Self-directed writing development across computer/AI-based tasks: Unraveling the traces on L2 writing outcomes, growth

mindfulness, and grammatical knowledge. Computers

in Human Behavior Reports, 17, 100566. https://doi.org/10.1016/j.chbr.2024.100566

Amo Sánchez-Fortún, J.M., & Domínguez-Oller, J.C.

(2024). Análisis sistémico de la alfabetización

discursiva en las prácticas académicas situadas: La escritura hipertextual en

trabajos de fin de grado. Revista de

Educación a Distancia (RED), 24(77).

https://doi.org/10.6018/red.574881

Ayuso-del Puerto, D., &

Gutiérrez-Esteban, P. (2022). La Inteligencia Artificial como recurso educativo

durante la formación inicial del profesorado. RIED-Revista Iberoamericana de Educación a Distancia, 25(2), 347-358. https://doi.org/10.5944/ried.25.2.32332

Biber, D. & Gray, B. (2010). Challenging

stereotypes about academic writing: Complexity, elaboration, explicitness. Journal of English for Academic Purposes, 9(1),

2–20. https://doi.org/10.1016/j.jeap.2010.01.001

Carlino, P. (2013). Alfabetización académica: un

cambio necesario, algunas tensiones inevitables y ciertas estrategias posibles.

Educación en Contexto, 1(1), 1–15.

Casheekar, A., Lahiri,

A., Rath, K., Sanjay Prabhakar,

K.S. & Srinivasan, K. (2024). A contemporary review on chatbots, AI-powered

virtual conversational agents, ChatGPT: Applications, open challenges and

future research directions. Computer Science

Review, 52. https://doi.org/10.1016/j.cosrev.2024.100632

Cassany, D. & Castelló, M. (2010). Escribir y comunicar en contextos

científicos y académicos. Graó.

Ciaccio, E.J. (2023). Use of artificial intelligence

in scientific paper writing. Informatics

in Medicine Unlocked, 41, 101253. https://doi.org/10.1016/j.imu.2023.101253

Cohen, J. (1988). Statistical

power analysis for the behavioral sciences.

Lawrence Erlbaum Associates.

Creswell, J.W. (2014). Research Design: Qualitative, Quantitative, and Mixed Methods

Approaches. SAGE Publications.

Dabis, A., & Csáki, C. (2024). AI and ethics:

Investigating the first policy responses of higher education institutions to

the challenge of generative AI. Humanities

and Social Sciences Communications, 11(1),

1-13. https://doi.org/10.1057/s41599-024-03526-z

Dergaa, I., Chamari, K., Zmijewski, P. & Saad, H. B.

(2023). From human writing to artificial intelligence generated text: examining

the prospects and potential threats of ChatGPT in academic writing. Biology of Sport, 40(2), 615-622. https://doi.org/10.5114/biolsport.2023.125623

Díaz-Cuevas, A. P. & Rodríguez-Herrera, J. (2024).

Usos de la inteligencia artificial en la

escritura académica: experiencias de estudiantes universitarios en 2023. Cuaderno de Pedagogía Universitaria, 21(42),

25-44. https://doi.org/10.29197/cpu.v21i42.595

Fathi, J. & Rahimi,

M. (2024). Utilising artificial intelligence-enhanced writing

mediation to develop academic writing skills in EFL learners: a qualitative

study. Computer Assisted Language

Learning, 1–40. https://doi.org/10.1080/09588221.2024.2374772

Fiorillo, L. (2024). Confronting the demonization of

AI writing: Reevaluating its role in upholding scientific integrity. Oral Oncology Reports, 12, 100685. https://doi.org/10.1016/j.oor.2024.100685

García-Peñalvo, F.J. (2024). Inteligencia artificial generativa y

educación: Un análisis desde múltiples perspectivas. Education in the Knowledge Society (EKS), 25, e31942-e31942. https://doi.org/10.14201/eks.31942

García-Peñalvo, F. J.,

Llorens-Largo, F. & Vidal, J. (2024). La nueva realidad de la educación

ante los avances de la inteligencia artificial generativa. RIED-Revista Iberoamericana de Educación a Distancia, 27(1), 9-39. https://doi.org/10.5944/ried.27.1.37716

Goulart, L., Matte, M. L., Mendoza, A., Alvarado,

L. & Veloso, I. (2024). AI or student writing? Analyzing

the situational and linguistic characteristics of undergraduate student writing

and AI-generated assignments. Journal of

Second Language Writing, 66, 101160. https://doi.org/10.1016/j.jslw.2024.101160

Huang, J., & Teng, M. F. (2025). Peer feedback and

ChatGPT-generated feedback on Japanese EFL students’ engagement in a foreign

language writing context. Digital Applied Linguistics, 2, 102469-102469. https://doi.org/10.29140/dal.v2.102469

Jin, F., Lin, C.H. & Lai, C. (2025). Modeling AI-assisted

writing: How self-regulated learning influences writing outcomes. Computers in Human Behavior,

165, 108538. https://doi.org/10.1016/j.chb.2024.108538

Khalifa, M. & Albadawy,

M. (2024). Using artificial intelligence in academic writing and

research: An essential productivity tool. Computer

Methods and Programs in Biomedicine Update, 5, 100145. https://doi.org/10.1016/j.cmpbup.2024.100145

Kress, G. & van Leeuwen, T. (2020). Reading

images: The grammar of visual design. Routledge.

McKinley, J. (2013). Displaying critical thinking in

EFL academic writing: A discussion of Japanese to English contrastive rhetoric.

The East Asian Journal of Applied

Linguistics, 3(2), 133–157. https://doi.org/10.1075/eajal.3.2.04mck

Ou, A. W., Khuder, B.,

Franzetti, S., & Negretti, R. (2024).

Conceptualising and cultivating critical GAI literacy in doctoral academic

writing. Journal of Second Language

Writing, 66, Article 101156. https://doi.org/10.1016/j.jslw.2024.101156

Perkins, M., Furze, L., Roe, J., & MacVaugh, J. (2024). The Artificial Intelligence Assessment

Scale (AIAS): A framework for ethical integration of generative AI in

educational assessment. Journal of

University Teaching and Learning Practice, 21(06).

Pigg, S. (2024). Research writing with ChatGPT: A

descriptive embodied practice framework. Computers

and Composition, 71, 102830. https://doi.org/10.1016/j.compcom.2024.102830

Román-Acosta, D. D. (2023). Más allá de las palabras: Inteligencia

artificial en la escritura académica. Escritura

Creativa, 4(2), 36–43.

Shadish, W.R., Cook, T.D.,

& Campbell, D.T. (2002). Experimental

and Quasi-Experimental Designs for Generalized Causal Inference. Houghton

Mifflin.

Su, Y., Lin, Y. & Lai, C. (2023). Collaborating with

ChatGPT in argumentative writing classrooms. Assessing Writing, 57, 100752. https://doi.org/10.1016/j.asw.2023.100752

Teng, M.F. (2024). Metacognitive awareness and EFL

learners’ perceptions and experiences in utilizing ChatGPT for writing

feedback. European Journal of Education,

60, 1-16. https://doi.org/10.1111/ejed.12811

Trochim, W.M.K. (2006). Research Methods: The Essential Knowledge Base. Cengage Learning.

Walter, Y. (2024). Embracing the future of artificial

intelligence in the classroom: The relevance of AI literacy, prompt

engineering, and critical thinking in modern education. International Journal of Educational Technology in Higher Education, 21(15). https://doi.org/10.1186/s41239-024-00448-3

Wang, C., Wang, H., Li, Y., Dai, J., Gu, X., & Yu,

T. (2024). Factors influencing university students’ behavioral

intention to use GenAI: Integrating the theory of planned behavior

and AI literacy. International Journal of

Human–Computer Interaction, 1-23. https://doi.org/10.1080/10447318.2024.2383033

Wise, B., Emerson, L., Van Luyn, A., Dyson, B., Bjork,

C. & Thomas, S.E. (2024). A scholarly dialogue: writing scholarship,

authorship, academic integrity and the challenges of AI. Higher Education Research & Development, 43(3), 578-590. https://doi.org/10.1080/07294360.2023.2280195